What this is

This is a VST3 plug-in (on Windows/macOS). An Audio Unit version is also available for macOS.

It converts audio from most stereo and surround formats to binaural (stereo for headphone listening) with head tracking, so that virtual speakers are correctly positioned and stay locked to your environment as you move your head.
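The idea of speakers staying 'locked to your environment' can be illustrated with a minimal sketch: for each virtual speaker, the renderer needs the angle between the speaker and your nose, which is the speaker's room angle minus your head yaw. This is not the plug-in's code (which is closed-source). The function name and the sign convention (positive angles to the listener's left) are assumptions made for illustration.

```python
def relative_azimuth(speaker_azimuth_deg: float, head_yaw_deg: float) -> float:
    """Angle of a virtual speaker relative to the listener's nose, in degrees.

    Assumed convention (not taken from the plug-in): positive angles are to
    the listener's left, and the result is wrapped into [-180, 180).
    """
    relative = speaker_azimuth_deg - head_yaw_deg
    # Wrap so that, for example, 190 degrees becomes -170 degrees.
    return (relative + 180.0) % 360.0 - 180.0


# A front-left speaker at +30 degrees: turn your head 30 degrees to the left
# and the speaker should now be rendered dead ahead.
print(relative_azimuth(30.0, 30.0))
```

A real renderer would also account for pitch and roll, which needs a full 3D rotation rather than a single subtraction.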

You can use it to watch films, listen to music, or get a perspective when you’re working with immersive audio. It will work natively at any sample rate from 44.1kHz to 192kHz.

Why it exists

Mostly this is another reason to buy a Supperware Head Tracker, as for £78 you’ll have a good off-the-shelf system for listening to many kinds of VR audio. But also:

- It demonstrates what the head tracker can do when it’s harnessed to a decent rendering engine (and others exist: follow the link above for a fuller list);

- It’s a serious case study for our open-source Head Tracker C++/JUCE API;

- It’s a reasonably novel approach to spatialising technology that may end up in other things.

Installation and use

You’ll want to download one or more of these, depending on your operating system:

- Windows VST3: unzip and copy into C:\Program Files\Common Files\VST3

- macOS VST3: unzip and copy into ~/Library/Audio/Plug-Ins/VST3 (hold down the ‘Alt’ key in Finder’s ‘Go’ menu to get to Library the easy way).

- macOS Audio Unit: unzip and copy into ~/Library/Audio/Plug-Ins/Components (hold down the ‘Alt’ key in Finder’s ‘Go’ menu to get to Library the easy way).
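If you would rather script the copy, the destination folders above can be captured in a small lookup. This is only a sketch: the function name and the format labels (`"VST3"`, `"AU"`) are invented for illustration, and the system names are the ones Python's `platform.system()` returns.

```python
import os

# Destination folders as listed above, keyed by (system, plug-in format).
INSTALL_DIRS = {
    ("Windows", "VST3"): r"C:\Program Files\Common Files\VST3",
    ("Darwin", "VST3"): "~/Library/Audio/Plug-Ins/VST3",
    ("Darwin", "AU"): "~/Library/Audio/Plug-Ins/Components",
}


def install_dir(system: str, plugin_format: str) -> str:
    """Return the folder to copy the unzipped plug-in into."""
    try:
        folder = INSTALL_DIRS[(system, plugin_format)]
    except KeyError:
        raise ValueError(f"no {plugin_format} build for {system}") from None
    # Expand '~' to the user's home directory for the macOS paths.
    return os.path.expanduser(folder)
```

Calling `install_dir(platform.system(), "VST3")` (after `import platform`) would then give the right folder on either operating system.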

When you run a digital audio workstation, it will find the plug-in automatically.

In use

You’ll need three things:

- A digital audio workstation that can run VST3 plug-ins, and preferably can open a video window (Pro Tools, Logic, Reaper, Tracktion …)

- Something to listen to: a movie file in MKV or MP4, for example, or just an audio file with between 2 and 16 channels.

- The Supperware Head Tracker (optional, but it’s kind of the point).

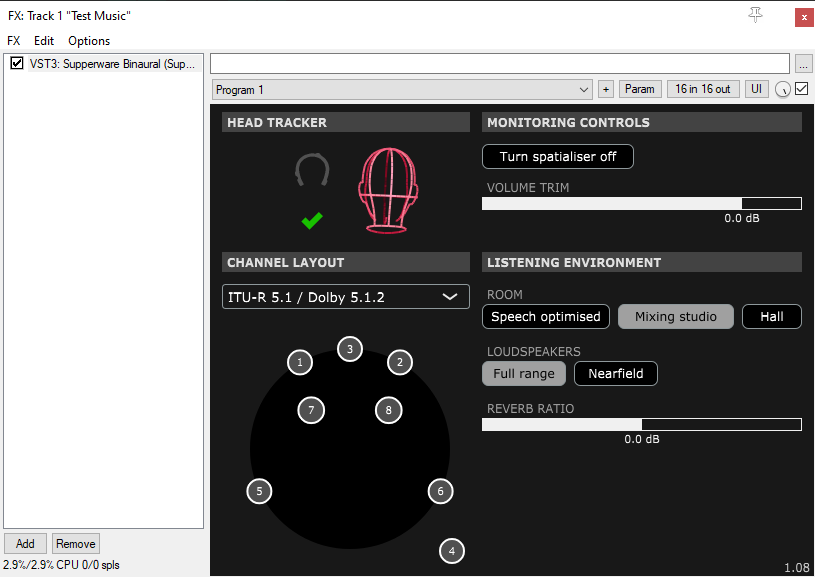

Once the head tracker is plugged in, click the green tick to connect it to the plug-in. (If the tick doesn’t turn green and the animated head doesn’t appear when you click it, make sure that something else hasn’t taken exclusive control of the head tracker. Bridgehead will do this, for example. You don’t need Bridgehead when you run the plug-in: you’ll need either to close it or to disconnect from it.)

You can double-click on the head whenever you want to re-centre it. The whole panel works like a miniaturised version of Bridgehead, including the little icon to the top-left of the head that opens a version of the tracker settings panel.

Working in real-time

There are plenty of tutorials online that will help you to reduce round-trip delay so that the audio doesn’t lag behind your head movements. Apply the same tricks that musicians use when they’re overdubbing. Most of this comes down to:

- Turning down the buffer size in your audio settings to be as low as your system can bear;

- Using ASIO on Windows if your audio device supports it.

It will be more sluggish than the hardware headphone amp we made during lockdown with its 2-millisecond buffers, but that’s personal computers for you.
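To get a feel for the numbers, the delay contributed by a single audio buffer is just its length in samples divided by the sample rate. The sketch below works that out for a few common buffer sizes. The 48kHz sample rate is an assumption chosen for the example, and the real lag will be higher than this, since the driver, the DAW, and the head tracker all add their own delays.

```python
def buffer_latency_ms(buffer_size_samples: int, sample_rate_hz: float) -> float:
    """Delay contributed by one audio buffer, in milliseconds."""
    return 1000.0 * buffer_size_samples / sample_rate_hz


SAMPLE_RATE_HZ = 48000.0  # assumed for this example

for size in (64, 96, 128, 256, 512, 1024):
    print(f"{size:5d} samples: {buffer_latency_ms(size, SAMPLE_RATE_HZ):6.2f} ms")
```

At 48kHz, a 2-millisecond buffer is 96 samples, which puts typical DAW buffer sizes of several hundred samples into perspective.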

One-paragraph user manual

Drag an audio or video file of interest into, say, Reaper, and open the video window (Shift+Ctrl+V in Reaper). Open the ‘FX’ window for the track you’re working with, and add the Supperware Binaural plug-in. Inside the plug-in, select the correct layout (almost all of these will work with two-channel stereo). Click the green tick to connect to the head tracker. Double-click on the animated head to zero it when you’re facing forwards. To configure it, click the little head tracker icon in the plug-in window (this does most of the things that Bridgehead can do). If you want to experiment with the environments and speakers, start pressing other buttons. The different reflection patterns and intensities are tuned to different kinds of content. The audio might click slightly when you change between them: it’s a prototype and it’s free.

Button-by-button instructions

Turn spatialiser off: select this to listen to the unprocessed audio channels. Left channel goes to left ear, right channel goes to right ear. In surround modes, the other channels are mixed in too, so you can hear the dialogue and surround speakers, but it’s just straightforward level panning with no spatialisation.
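As a rough picture of what 'straightforward level panning' means, here is a sketch of a 5.1-to-stereo downmix for one sample frame. The plug-in's actual gains are not published: the -3dB gain on the centre and surround channels and the decision to leave out the LFE channel are assumptions borrowed from common downmix practice, and the function name is made up.

```python
import math

MINUS_3_DB = 1.0 / math.sqrt(2.0)  # assumed gain for centre and surrounds


def downmix_5_1_frame(fl, fr, c, lfe, sl, sr):
    """Fold one 5.1 sample frame down to (left, right) by level panning only.

    Front left/right pass straight through; the centre is shared equally
    between both ears; each surround goes to the ear on its own side.
    The LFE channel is dropped in this sketch (an assumption).
    """
    left = fl + MINUS_3_DB * (c + sl)
    right = fr + MINUS_3_DB * (c + sr)
    return left, right
```

Dialogue that lives only in the centre channel ends up at equal level in both ears, which is why it stays audible with the spatialiser off.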

The channel layout: Select different loudspeaker layouts from the drop-down box. The plan view in the bottom left shows where the speakers are located.

- Click on these speakers to solo them.

- Shift+click will allow you to solo more than one at once.

- Ctrl+click will mute a channel (if it’s not soloed).

- Alt+click will allow you to solo (and Alt+Ctrl+click to mute) the frontal, rear, or elevated speakers all at once.

- Dragging somewhere on the background of this plan view will rotate it, so you can see which channels (if any) are elevated.
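The solo and mute rules above follow the usual mixing-desk logic, which can be sketched in a few lines. This is an illustration and not the plug-in's code: the function name and channel labels are hypothetical, and it assumes that a solo always takes precedence over a mute.

```python
def audible_channels(channels, soloed, muted):
    """Return the channels you would hear, in their original order.

    If anything is soloed, only the soloed channels play (mutes are ignored).
    Otherwise every channel plays except the muted ones.
    """
    if soloed:
        return [ch for ch in channels if ch in soloed]
    return [ch for ch in channels if ch not in muted]


layout = ["L", "R", "C", "LFE", "Ls", "Rs"]  # hypothetical 5.1 labels
print(audible_channels(layout, soloed={"L", "R"}, muted=set()))
print(audible_channels(layout, soloed=set(), muted={"C"}))
```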

The plug-in doesn’t do Ambisonics rendering, as there’s no universally-agreed way of doing that, but you can audition Ambisonics by converting it into a fixed-speaker format using a specialised decoder. IEM and Sparta have free decoders in their suites.

Reverb ratio slider: Customise your room! Fully left is almost entirely direct sound; fully right is almost entirely early reflections and reverb. Manipulating this control feels a little like moving the speakers nearer or further away. The 0dB setting is balanced to sound correct.

Room: Changes the reflections and reverb to put you in different environments. Speech-optimised is quite close and dry: good for drama/podcasts/close work, but a bit too ‘analytical’ for most music. Mixing studio is a good general-purpose setting with a bit of comfort ambience, and simulates loudspeakers at about 2.5 metres away. Hall is great for enjoying classical music because it provides the auditory cues for a larger, live room.

Loudspeakers: The ‘Nearfield’ option employs virtual loudspeakers with a frequency response of 100Hz–16kHz, and affects the tonal quality of the reverb so it sounds more like monitoring on NS-10M or LS3/5a-sized speakers.

Chuck any feedback to Supperware in any form (such as the contact form at supperware.co.uk) and it’ll be gratefully received. We might even fix the bugs. Thanks!

Listening to YouTube / games / a musical instrument / Internet radio …

If you’re using a Mac, you can use a third-party program such as menuBUS or Audio Hijack to listen to your computer audio through this plug-in. This lets you listen to anything you can play (games, radio, YouTube) through the spatialiser, rather than being tied to a digital audio workstation.

Otherwise, we’re not sure what you should do, which is one reason why we built a few of these in hardware during 2020. They’re two-channel only and a few versions behind this, but you can listen to anything spatialised with them. If you’re desperate to hear one, drop us an email.

Getting the source code

The spatialiser plug-in is free but not open-source, as Supperware needs to eat occasionally. The head tracker API (the part of this plug-in that connects the head tracker to the spatialiser) is open-source though: you can use it to build your own scene rotator or experiment with the head tracker in other ways. That part is here.

Version history

v1.15 (2023-12-01)

- fixed a stupid bug in the new profile.

v1.14 (2023-11-29)

- added Cubase/Microsoft 7.1.4 layout with the back and side channels exchanged with respect to Dolby’s layout (see the ‘Important’ note here).

v1.13 (2023-09-11)

- 5.1 film layout added by request.

- refactoring and GUI revisions brought in from Bridgehead 1.21.

v1.12 (2023-03-27)

- octagonal channel layouts added by request.

v1.11 (2022-11-24)

- holding down ‘Alt’ over the channel layout panel allows the user to solo/mute front, rear, and height channels as groups.

- head-api fix: can now change the animated head view by dragging with a mouse, like Bridgehead.

- head-api fix: compass and slow central pull weren’t turning off properly.

v1.10 (2022-11-06)

- fixed a crash on initialisation.

v1.09 (2022-11-03)

- fixes to make the plug-in work properly up to 192kHz sampling frequency.

- tuned reverb profiles slightly.

v1.08 (2022-11-03)

- bug fix: race condition caused an occasional crash when changing profiles.

- bug fix: erroneous eight-channel limit when invoking plug-in for the first time.

- bug fix: rendering the Auro-3D top centre channel could cause all audio to cut out.

- bug fix: a path-length error in room reflection calculations caused by fat fingers.

- added SPS-8 and SPS-12 layouts.

- tidied up mouse-hover hints in channel layout window.

- z-buffered the channel layout window.

v1.07 (2022-11-01)

- bug fix: no longer distorts when used with larger buffer sizes.

- bug fix: storage and recall of reverberation levels.

- plan view added with ability to change layouts.

- updated room profiles and improved the sound.

- small optimisations for performance.

v1.06 (2022-06-11)

- settings panel updated to reflect 0.65 firmware revisions.

v1.05 (2022-06-04)

- plug-ins now signed and notarized on macOS.

- algorithm: adjusted interaural timings again after comparing with new ITD tables and competing plug-ins.

- algorithm: remodelled EQ (now simpler).

- algorithm: adjusted reverb ratio so that smaller rooms are now slightly more reverberant by default.

- bug fix: now manages storage and recall of its full status properly.

v1.04 (2021-09-20)

- adjusted tracker panel to match latest firmware.

- slightly adjusted interaural timings.

- fixed assertions and memory allocation bugs.

v1.03 (2021-08-02)

- UI: direct/reverberant slider added.

- algorithm: better EQ so that direct sound is flatter and balanced to a better level.

- algorithm: bug fixes in filters and other places.

- algorithm: bypass mode now does a proper surround downmix, and solos work inside it.

- algorithm: filters and delays are reset as a ‘panic’ mode when changing rooms or releasing bypass mode.

- algorithm: improved the efficiency of the elastic delay.

- bug fix: v1.02 lost the ability to work in 5.1 mode on macOS.

v1.02 (2021-07-23)

- algorithm: elevated cues weren’t working properly for various reasons, and detrimentally affected other positioning in a subtle way.

- algorithm: improved elevated cues as well as actually fixing them.

- algorithm: stability improvements when buffer size turns out to be an odd number.

v1.01 (2021-07-21)

- UI: more distinctive contrast between button ‘on’ and ‘off’ colours.

- UI: reflects status of plugin properly when window is closed and reopened.

- UI: snazzy new head tracker control module, with a configuration window.

- algorithm: new fluid delay, to get rid of commutative clicking.

- algorithm: added gain steering, so reverb comes from slightly behind.

- algorithm: conceptual separation of direct and early reflections.

- algorithm: enhanced and rethought reverb.

- algorithm: level boost in EQ removed as it clipped stuff.